microGPT Foundations

The Karpathfinder

Inspired by one of the OGs of deep learning.

An unofficial microGPT course for learning GPTs from first principles.

Andrej Karpathy showed the world that you can understand neural networks by building them from scratch — one line at a time.

This course follows that philosophy. You'll walk through a tiny GPT implementation, understand every block, and build your own variant.

No hand-waving. No black boxes. Just code, math, and clarity.

Founding: $695 Core / $1,095 Verified

One-time payment · Lifetime access · 14-day refund policy

~200 lines. That's the whole model.

The Problem

You're still treating GPTs like magic.

Most people use LLMs every day without understanding a single line of how they work.

You've watched the YouTube explanations, but the code still feels opaque.

You can call an API, but you can't reason about what's happening inside.

You know the buzzwords — attention, embeddings, transformers — but not the mechanics.

You hesitate to modify model code because you don't trust your own understanding.

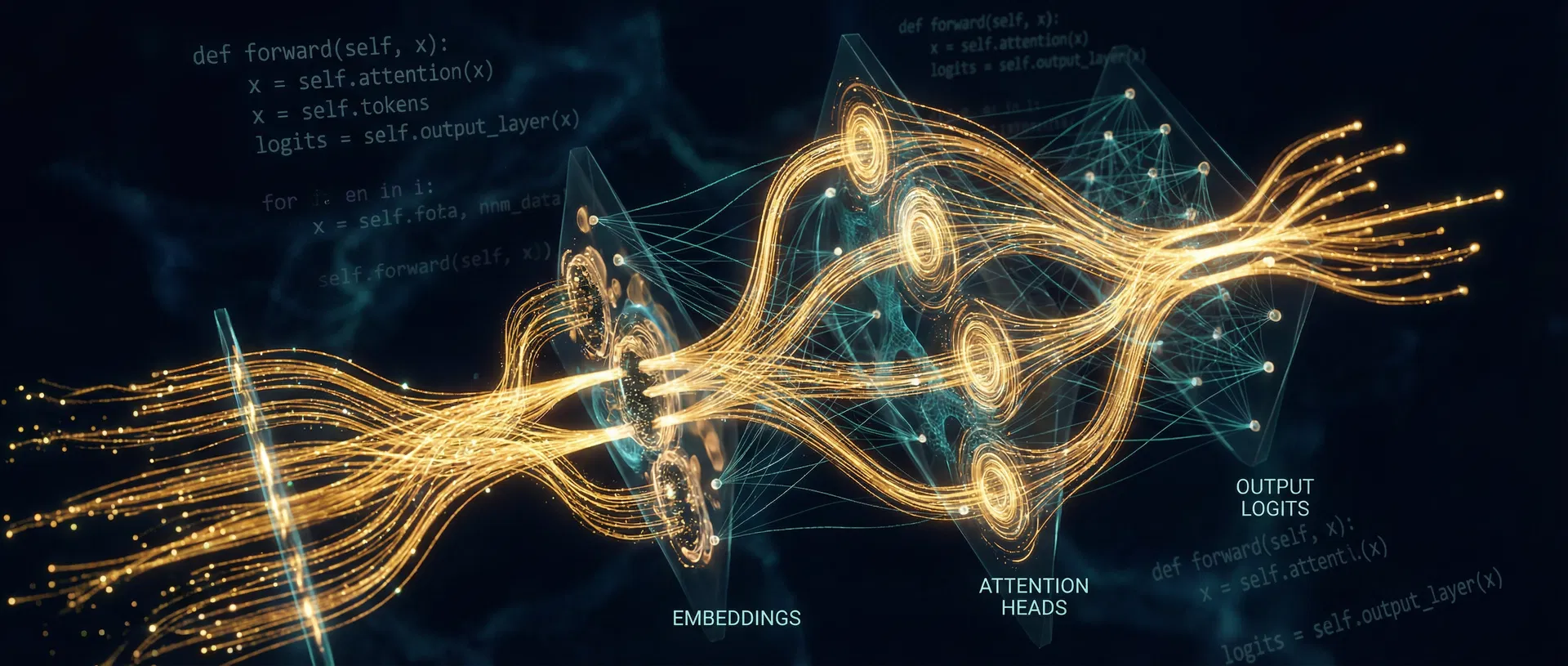

The Mechanism

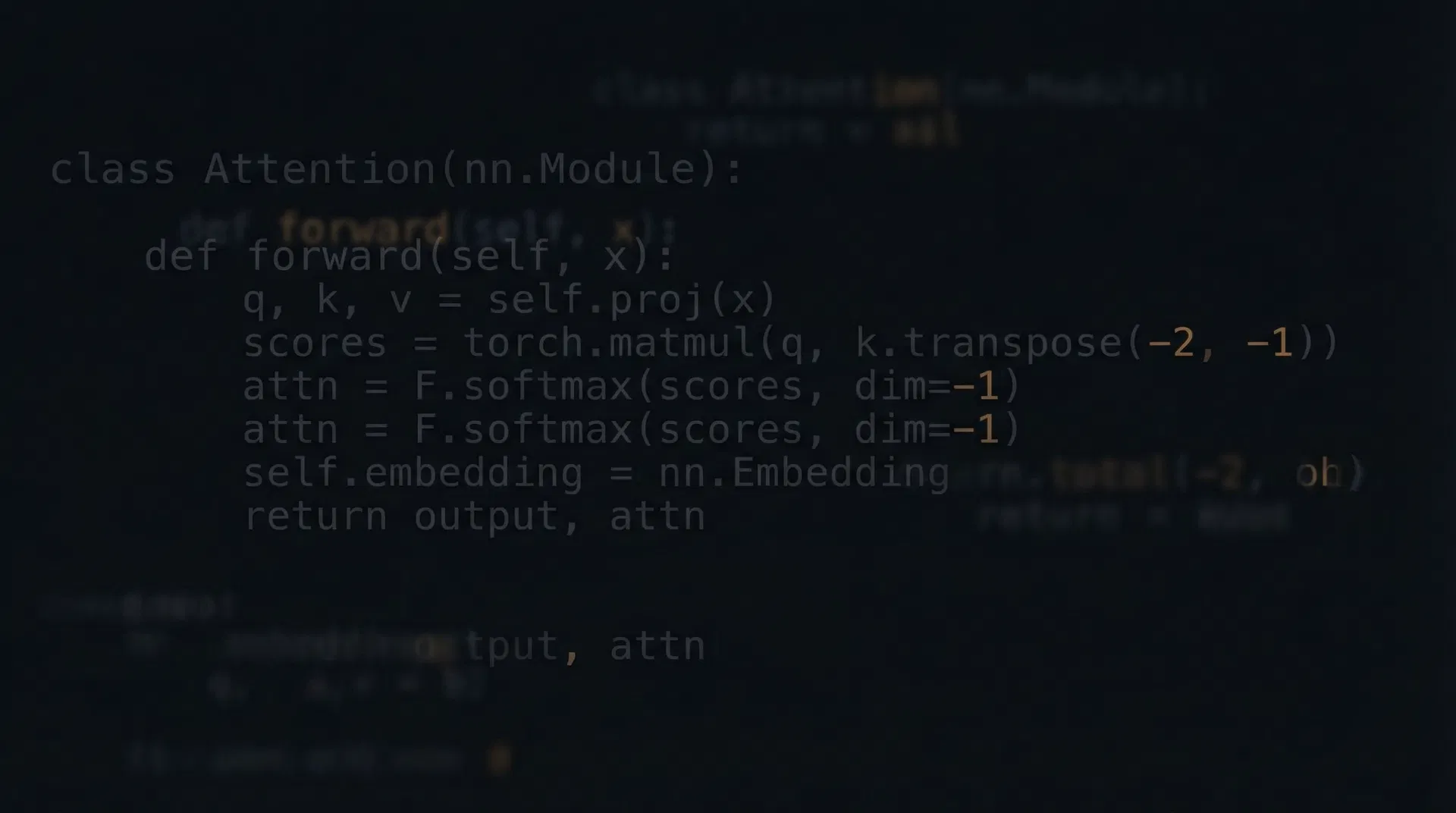

One tiny codebase. Complete understanding.

Inspired by Karpathy's approach: build it yourself, understand everything.

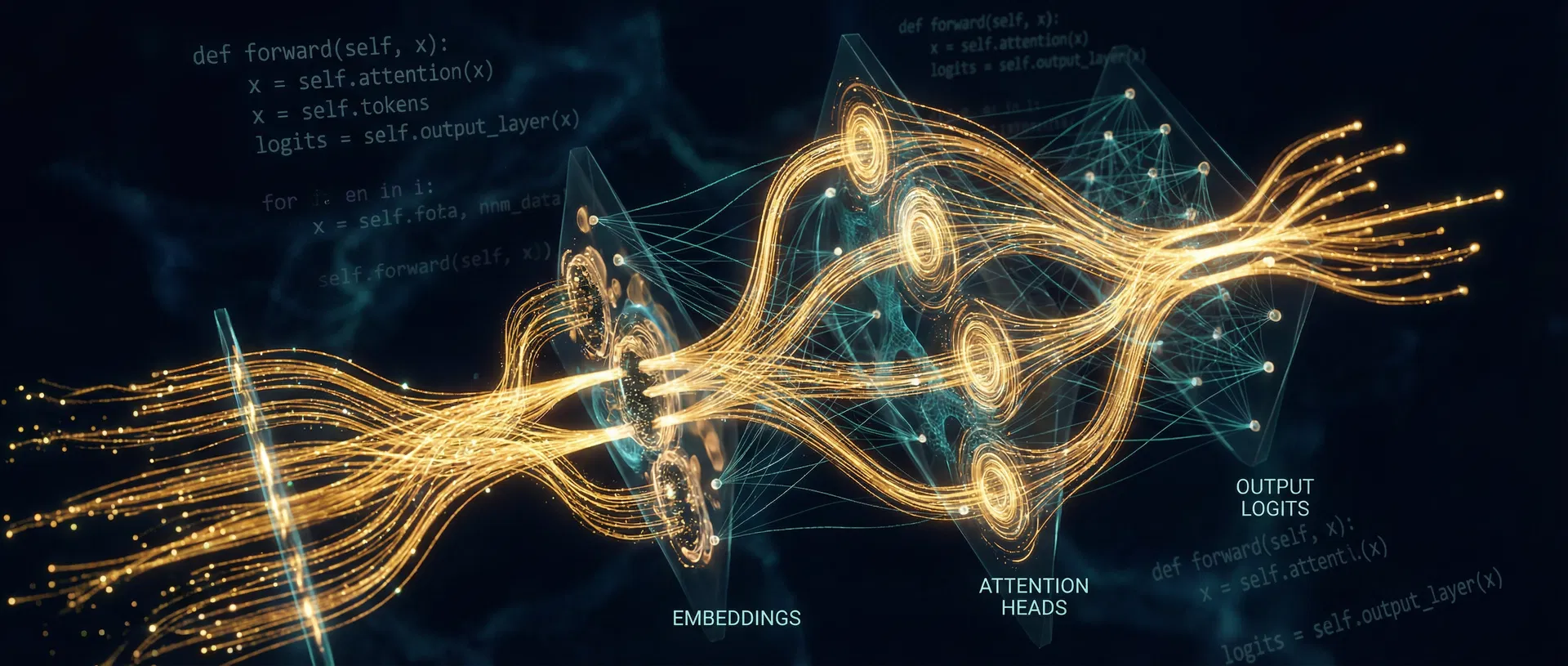

You'll follow the complete data path through a working GPT implementation, from tokens to logits.

By the end, you won't just "kind of get transformers."

You'll be able to open the file, point at any line, and explain exactly what it does and why it's there.

The Trail Map

Follow the path from tokens to logits.

Seven modules. One codebase. Each checkpoint builds on the last until you can explain, modify, and build your own tiny GPT.

Trailhead

Orientation, prerequisites, and what a GPT is actually trying to do. Set up your environment and understand the landscape before the hike begins.

Learner understands the project scope, has a working environment, and can articulate what next-token prediction means at a high level.

Token Trail

Documents, BOS, vocabulary, tokenization, and next-token prediction. Understand what the model sees and what it's trying to predict.

Learner can explain what the model is predicting and why, trace from raw text to token IDs, and describe the vocabulary.
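
To make "raw text to token IDs" concrete, here is a minimal sketch of character-level tokenization with a BOS marker. The toy corpus, the `<BOS>` string, and the helper names are illustrative assumptions, not the course's actual code; using BOS to mark both the start and the end of a document is also an assumption.

```python
# Hypothetical toy corpus; the course's real dataset is not specified here.
docs = ["ada", "bob"]

# Vocabulary: one BOS marker plus every distinct character, sorted for stable IDs.
BOS = "<BOS>"
vocab = [BOS] + sorted(set("".join(docs)))
stoi = {tok: i for i, tok in enumerate(vocab)}

def encode(doc):
    """Map a document to token IDs, wrapped in BOS on both sides (an assumption)."""
    return [stoi[BOS]] + [stoi[ch] for ch in doc] + [stoi[BOS]]

def next_token_pairs(ids):
    """Pair each token with the token that follows it: (input, target)."""
    return list(zip(ids[:-1], ids[1:]))

ids = encode("ada")
pairs = next_token_pairs(ids)
print(ids)    # [0, 1, 3, 1, 0]
print(pairs)  # [(0, 1), (1, 3), (3, 1), (1, 0)]
```

Each pair is one training example: given the left token, predict the right one.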

Gradient Gorge

Bigram intuition, loss functions, autograd, backpropagation, and learning dynamics. Where the actual learning happens.

Learner can explain where learning happens, how parameters update, and what the loss function is measuring.
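
As a rough illustration of "how parameters update," the sketch below takes one gradient step on the cross-entropy loss for a single prediction. It is hand-derived rather than autograd-based, and the three-token vocabulary, starting logits, and learning rate are made-up values for illustration only.

```python
import math

def softmax(logits):
    """Turn raw scores into probabilities (shifted by the max for stability)."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def nll(logits, target):
    """Negative log-likelihood of the correct next token."""
    return -math.log(softmax(logits)[target])

def grad_nll(logits, target):
    """Gradient of the loss w.r.t. the logits: probabilities minus one-hot target."""
    probs = softmax(logits)
    return [p - (1.0 if i == target else 0.0) for i, p in enumerate(probs)]

logits = [0.0, 0.0, 0.0]   # hypothetical: the model starts with no preference
target = 2                 # hypothetical: index of the correct next token
lr = 0.5                   # hypothetical learning rate

loss_before = nll(logits, target)
grads = grad_nll(logits, target)
logits = [x - lr * g for x, g in zip(logits, grads)]  # one gradient descent step
loss_after = nll(logits, target)
```

After the step, `loss_after` is lower than `loss_before`: the update raised the score of the correct token and lowered the others.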

Attention Pass

Embeddings, positional information, self-attention, residual connections, MLP blocks, and layer normalization. The transformer core.

Learner can trace a complete forward pass and explain every major component of the transformer architecture.
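
For a feel of the self-attention step, here is a simplified single-head sketch in plain Python. It assumes the query, key, and value vectors are already computed (a real block would derive them from learned projections) and is not taken from the course codebase.

```python
import math

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def causal_attention(q, k, v):
    """Scaled dot-product attention where position t sees only positions <= t.

    q, k, v are lists of T vectors (lists of floats) of equal length.
    """
    d = len(q[0])
    out = []
    for t in range(len(q)):
        # Score the current query against every key up to and including t.
        scores = [
            sum(qi * ki for qi, ki in zip(q[t], k[s])) / math.sqrt(d)
            for s in range(t + 1)
        ]
        weights = softmax(scores)
        # Output is the attention-weighted average of the visible value vectors.
        out.append([
            sum(w * v[s][j] for s, w in enumerate(weights))
            for j in range(len(v[0]))
        ])
    return out

# Hypothetical 3-token sequence with 2-dimensional vectors.
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
out = causal_attention(x, x, x)
```

The first position can only attend to itself, so `out[0]` equals `x[0]`; later positions blend everything before them.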

Optimizer Ridge

Adam optimizer, training loops, logits interpretation, sampling strategies, temperature, and inference. From training to generation.

Learner can configure training, interpret logits, and generate text with different sampling strategies.
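
Here is one way temperature sampling can look in practice, as a small sketch under stated assumptions: the logits are invented, the function names are hypothetical, and treating temperature 0 as greedy argmax is a common convention rather than something the course specifies.

```python
import math
import random

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def token_probs(logits, temperature=1.0):
    """Divide logits by the temperature, then normalize with softmax."""
    return softmax([x / temperature for x in logits])

def sample_token(logits, temperature=1.0, rng=random):
    """Temperature 0 is treated as greedy argmax; otherwise draw from the distribution."""
    if temperature == 0:
        return max(range(len(logits)), key=lambda i: logits[i])
    probs = token_probs(logits, temperature)
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]

logits = [1.0, 3.0, 2.0]  # hypothetical scores for a 3-token vocabulary
sharp = token_probs(logits, temperature=0.5)  # low temperature: peaked
flat = token_probs(logits, temperature=2.0)   # high temperature: spread out
greedy = sample_token(logits, temperature=0)
drawn = sample_token(logits, temperature=1.0, rng=random.Random(0))
```

Lower temperatures concentrate probability on the top-scoring token; higher temperatures flatten the distribution and make generation more varied.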

Summit Project

Modify the model, swap the dataset or architecture, and build your own tiny GPT variant. Ship something real.

Learner ships a working variant and can defend every modification they made.

Beyond the Map

What scales from tiny GPTs to real-world systems, and what changes in production. The bridge from learning to building.

Learner understands the gap between microGPT and production systems, and knows where to go next.

The Transformation

From black box to builder.

Before

- Buzzwords without mechanism

- Tutorials without intuition

- API usage without understanding

- Confidence without competence

After

- Explain every major block in a transformer

- Trace the full forward pass with confidence

- Modify architecture and understand the tradeoffs

- Build and ship your own tiny GPT variant

What's Included

Everything you need to follow the path.

7-Module Curriculum

From tokens to sampling, every concept built on the last

Gated Member Portal

Track your progress, complete quizzes, build your understanding

Quizzes & Articulation Checks

Prove you understand — don't just watch and nod

Capstone Project

Build your own GPT variant and defend your choices

Certificate of Completion

A verifiable credential that proves real understanding

Private Community

Learn alongside other builders in our Circle community

Lifetime Updates

As the field evolves, so does the course

Free Lesson Preview

Try before you commit — no credit card required

Referral Rewards

Share the path and earn rewards

Built For

Developers

Who want to understand what's actually inside the models they use every day

ML Aspirants

Building first-principles intuition before diving into larger frameworks

AI Builders

Shipping products on top of LLMs who need to understand the engine

Technical Creators

Who teach, write, or explain AI and want to go deeper than surface-level

Not For You If

You want high-level summaries without touching code

You refuse to read Python or work through implementations

You want passive entertainment, not active mastery

You think understanding means watching someone else understand

The Philosophy

Inspired by the way the OGs teach.

Andrej Karpathy changed how people learn neural networks. Instead of starting with theory and working down, he starts with code and works up. Build it, run it, break it, understand it.

The Karpathfinder follows that same philosophy. You won't watch lectures about transformers — you'll build one. A tiny, readable GPT that fits in a single file. Every line explained. Every block justified.

"What I cannot create, I do not understand." — Richard Feynman

Your Guide

Lloyd Clark

[Instructor bio — add your background, credentials, and what drives you to teach this material. Keep it honest and specific. No fake credentials.]

[email protected]

14-Day Clarity Guarantee

If the first two modules don't create genuine clarity about how a tiny GPT works, email us and we'll refund your purchase. No questions, no hoops. We're confident in the path.

Choose Your Path

Pricing

One-time payment. Lifetime access. No subscriptions, no upsells, no surprises.

Free Lesson

Try the first lesson and diagnostic quiz before you commit.

- First lesson on tokens & prediction

- Diagnostic quiz

- No credit card required

The Karpathfinder Core

founding price

The complete path from tokens to logits, with lifetime access.

- Full 7-module curriculum

- Lifetime access & updates

- Private Circle community

- Quizzes & articulation checks

- Capstone project

- Certificate of completion

Core + Verified

founding price

Everything in Core, plus a graded assessment and verified credential.

- Everything in Core

- Graded final assessment

- Enhanced verified certificate

- Public verification page

- Shareable badge pack

Teams

microGPT foundations for technical teams that need shared understanding.

- Plans for 10, 25, or 50+ seats

- Verified certificates included

- Admin reporting dashboard

- Onboarding & kickoff support

- Invoice-based purchasing

Questions

Frequently Asked Questions

Ready to follow the path?

Stop treating GPTs like magic. Start understanding them from first principles — the way the OGs intended.